We are Digital

Joining Orange Business, within the Digital Services business line, means joining a company that fosters innovation and knowledge sharing, enabling you to grow into a true expert.

Join our team of Data Artists, Cloud Gurus, and Digital Workspace Heroes across Europe, working together in a flexible, agile and dynamic environment.

We are all about exploring fresh and smart ideas, learning from our experiences and mistakes, and thinking outside the box to push industries' boundaries. We believe in the power of people, uniting diverse skills and minds to help everyone reach their full potential together.

Join us for an exciting journey where you'll not only excel in your field but also be part of a supportive and dynamic community dedicated to your success.

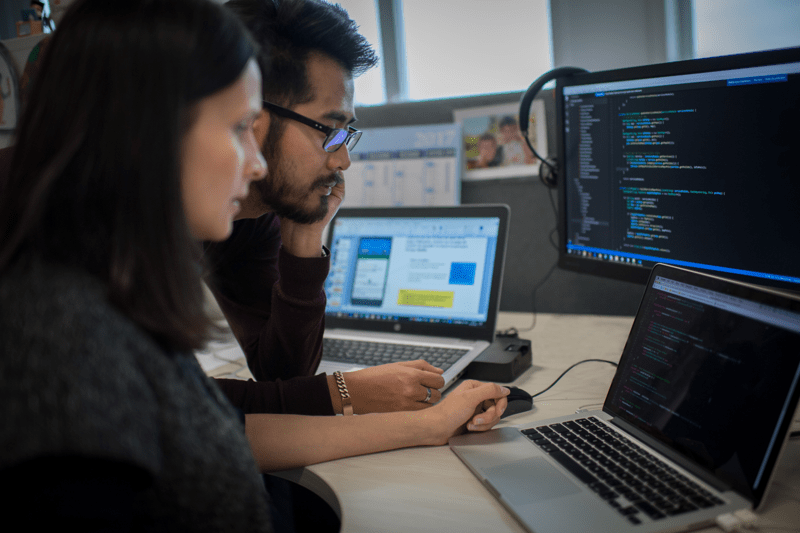

Areas of expertise

Key figures

Countries present in

Offices

Employees

Current job openings

- Data Gouvernance - Data Architect - Data Modeler · Data & AI · Geneva · Hybrid Remote
- Senior Controller Team Lead (f/m/d) in Berlin · Business Partnering & Sales · Berlin · Hybrid Remote
- Data Analyse & Visualisation Consultant · Data & AI · Geneva · Hybrid Remote
- Head of Finance (f/m/d) in Berlin · Business Partnering & Sales · Berlin · Hybrid Remote
- Director Finance (f/m/d) in Berlin · Business Partnering & Sales · Berlin · Hybrid Remote
- Young Graduate Program Data Consultant (fluent ... · Data & AI · Brussels · Hybrid Remote
- Graduate Program Customer Experience (CX) Fluen... · Customer Experience · Brussels · Hybrid Remote
- Business Area Manager - Data & AI · Data & AI · Stockholm · Hybrid Remote
- Data Protection Security Consultant · Data & AI · Brussels · Hybrid Remote

Our offices and hybrid & remote ways of working

On this career site you will find opportunities in the Nordics, the Netherlands, Germany, Austria, Belgium, Luxembourg, Switzerland and Spain.

For most positions we offer hybrid working, where you split your time between one of our offices and home.

Don't live close to one of our offices? Then it's worth checking our fully remote positions (remote within the country of employment).

Our history

1992

Business & Decision was founded

2000

Basefarm was founded

2002

Login Consultants was founded

2008

The Unbelievable Machine Company was founded

2016

Orange Business acquired Login Consultants

2018

Orange Business acquired Business & Decision and the Basefarm Group

2022

The Basefarm Group rebranded to Orange Business

2023

Business & Decision rebranded to Orange Business

2023

The Digital Services business line of Orange Business was created

More about us:

We celebrate individuality and encourage everyone to be their authentic selves. We are committed to fostering an inclusive workplace culture that values unique talents without any form of prejudice or bias.

Have a look at our #WeAreDigital page here to learn more about our people and activities at the different locations.

If you would like more insight into life at Orange Business, have a look at our other channels and follow us there so you don't miss any updates!

Already working at Orange Business?

Let’s recruit together and find your next colleague.